The cr.yp.to blog


2016.03.15: Thomas Jefferson and Apple versus the FBI: Can the government censor how-to books? What if some of the readers are criminals? What if the books can be understood by a computer? An introduction to freedom of speech for software publishers. #censorship #firstamendment #instructions #software #encryption

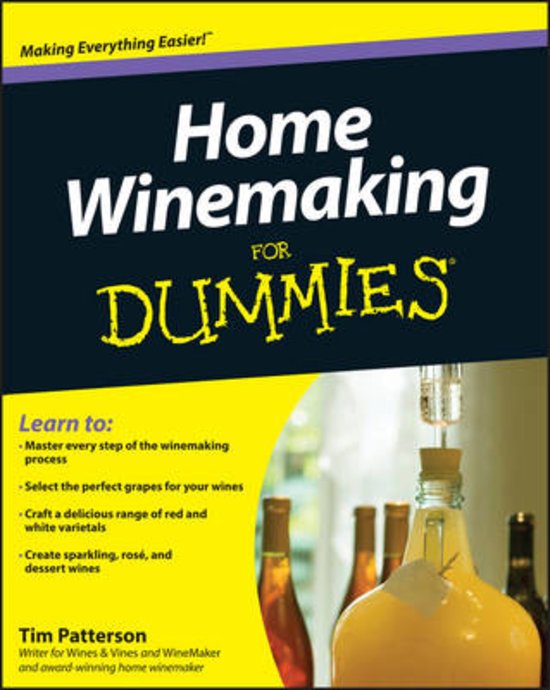

When Prohibition began,

anti-alcohol crusaders demanded

that the St. Louis Public Library burn every book

that explained "the home production of alcohol for drinks".

The librarian insisted on keeping the books

but agreed to hide them from the "general public".

Instructions have always been a target of censorship.

The censors say that it's bad, or that it might be bad,

if people follow the instructions.

Censorship of other types of information almost always follows this pattern:

the censors say that

information must be banned because of

what might happen when people receive and act upon the information.

However,

centuries of experience have taught us

that the benefits of free speech

are vastly larger than the benefits of censorship.

This judgment is enshrined in the First Amendment.

Narrow, carefully defined exceptions

A few well-established categories of communication

are so clearly undesirable for society that they don't have First Amendment protection.

For example,

the First Amendment doesn't protect

intentional solicitation of criminal activity:

"I'll pay you $1000 to steal that car for me."

The Supreme Court is careful to define these categories narrowly,

so that the categories don't threaten free speech.

For example,

imagine someone saying

"To discourage terrorism we should torture suspected terrorists and behead terrorists' families."

Can the government throw the speaker in jail for advocating violence?

Answer:

The speaker is protected if he isn't advocating

imminent lawless action.

Society has time to calmly discuss, and dismiss, the speaker's bad ideas.

As another example,

the First Amendment usually doesn't protect false information.

Contract law limits your freedom to make false promises.

Fraud law limits your freedom to deceive people for profit.

However,

publishers would be terrified to say anything

if they were exposed to libel lawsuits for innocent mistakes.

That's why

incorrect statements about public figures

are protected by the First Amendment unless they're made with

reckless disregard for the truth.

Libraries stay open even though they might help criminals

In Tom Clancy's 1994 novel Debt of Honor,

a terrorist pilot armed with a knife

seized control of an airplane loaded with fuel

and flew the airplane into the United States Capitol.

It's easy to respond emotionally to this:

obviously 9/11 was Clancy's fault;

all books describing violence or other crime should be banned.

Eventually common sense kicks in.

Burning books isn't going to stop terrorists and other criminals from coming up with their plots.

On the contrary:

the bad guys are perfectly capable of spotting security weaknesses on their own.

Public discussion of security weaknesses

has always been

society's most effective tool for getting those weaknesses fixed.

Instructions that are specifically intended to aid criminals

do lose First Amendment protection.

For example,

the First Amendment doesn't protect

a

murder-manual publisher

who "intended to provide assistance to murderers and would-be murderers".

But criminal intent is critical here.

The government can't censor the Clancy books.

It can't censor the Bond films.

It can't censor a

"Build Your Own Secret Bookcase Door" book,

even though some readers might be criminals hiding from the police.

It can't censor a "How to Fish" book,

even though some readers might be terrorists who don't deserve to eat

and who would be more easily caught if they were starving.

Demystifying software

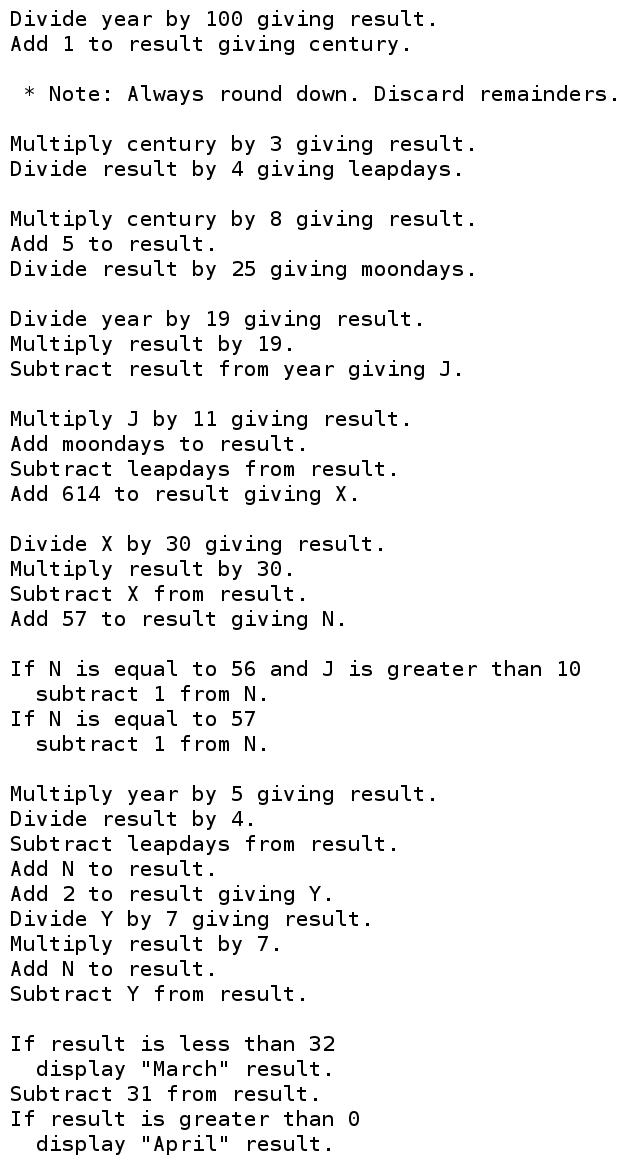

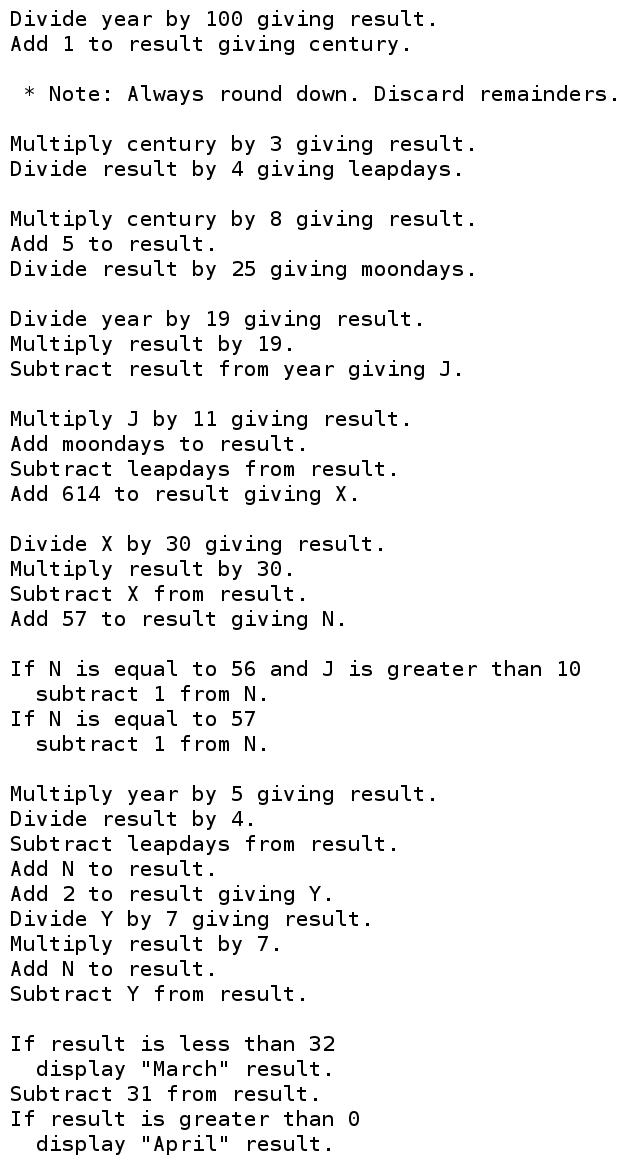

"Divide year by 100 giving result. Add 1 to result giving century."

These two sentences are the start of a few dozen instructions for

calculating the date of Easter.

You can understand these instructions.

These instructions are software;

this means that a computer can understand them too.
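To make the point concrete, here is a minimal sketch of the same kind of instructions written for a computer. Only the first two steps (`result` and `century`) come from the sentences quoted above. Everything after them is an assumption: it follows the well-known Gregorian Easter computation as presented by Knuth, which may differ in order and wording from the instructions pictured here. The function name `easter` and the variable names are hypothetical.

```python
def easter(year):
    """Return (month, day) of Easter Sunday for a Gregorian year (after 1582)."""
    # The two quoted steps:
    # "Divide year by 100 giving result. Add 1 to result giving century."
    result = year // 100
    century = result + 1

    # Assumption: the remaining steps follow Knuth's presentation of the
    # Gregorian Easter computation, not necessarily the pictured instructions.
    golden = year % 19 + 1                       # position in the 19-year lunar cycle
    skipped_leap_years = 3 * century // 4 - 12   # century years that were not leap years
    moon_correction = (8 * century + 5) // 25 - 5
    sunday_finder = 5 * year // 4 - skipped_leap_years - 10
    epact = (11 * golden + 20 + moon_correction - skipped_leap_years) % 30
    if (epact == 25 and golden > 11) or epact == 24:
        epact += 1

    day = 44 - epact                             # full moon, counted as a day of March
    if day < 21:
        day += 30
    day = day + 7 - (sunday_finder + day) % 7    # advance to the following Sunday

    if day > 31:
        return (4, day - 31)                     # April
    return (3, day)                              # March


print(easter(2016))  # (3, 27), i.e. March 27
```

A person can follow these steps with pencil and paper and reach the same date; the computer simply does it faster.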

Our computers are extensions of our brains.

They're often faster and more reliable than our brains are.

That's why we tell our computers to run software,

rather than following the same instructions by hand.

That's also why we delegate to these devices our memories,

the intimate details of our lives.

Have you heard the government arguing that it wants new powers to censor software?

Think about what happens when you remove the computer from the picture.

Could the government state the same rationale

for censoring instructions followed by people?

Does the First Amendment protect publication of the instructions despite this rationale?

Usually the answer to both questions is yes.

Let's try an example.

Apple is providing software for millions of people to encrypt their private files.

If this software is doing its job then nobody else can understand the files.

But a few of the people protected by this are criminals.

The government claims that it's "going dark".

Should Apple be allowed to publish this software?

Let's remove the computer from the picture.

Fact:

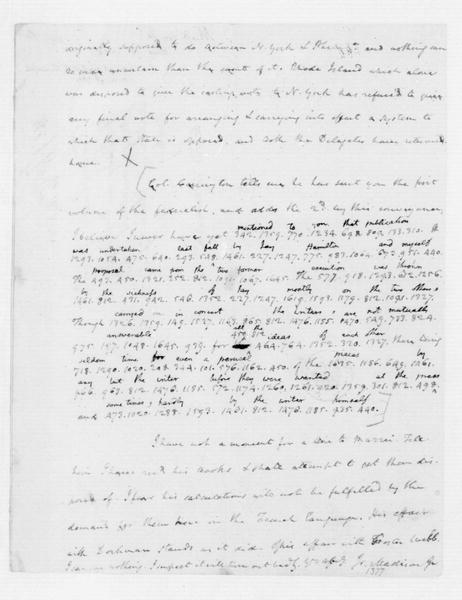

Thomas Jefferson was a cryptographer.

He distributed instructions that

James Madison used, by hand, to encrypt private files

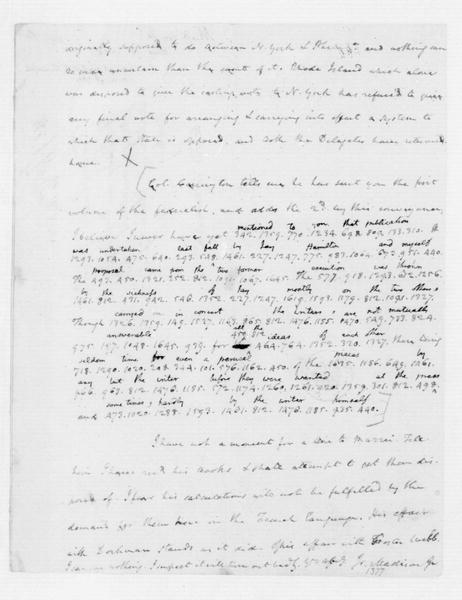

(such as the

partly encrypted letter back to Jefferson

shown on the left).

The effect of the encryption was that nobody else could understand the files.

Should a modern-day Jefferson be allowed to publish a "How to Encrypt" book?

What if the FBI says that Jefferson is helping criminals?

Answer:

The publisher is fully protected by the First Amendment.

The publisher doesn't intend to help criminals.

Sure,

publishing encryption instructions

might occasionally help criminals,

but it does

much more to stop criminals

and to

protect human rights.

Exceptions, revisited

If I were a lawyer trying to scare courts

into creating a First Amendment exception for software,

what would I do?

Answer: I would talk about bad software.

Imagine software for destroying navigational systems on airplanes.

Imagine a malware app that pretends to be a legitimate app for buying stocks

but that, when you run it, ends up giving your money to a thief.

Clearly the government needs to be able to make laws regarding software!

To demystify these examples,

let's eliminate the computer.

Imagine a book called "How to Destroy Navigational Systems on Airplanes".

The courts already know how to handle this:

intentional "aiding and abetting" of criminal activity

isn't protected by the First Amendment.

It doesn't matter whether the criminal who destroyed the navigational system

was following instructions by hand,

or was having his computer follow instructions on his behalf.

Or imagine a book that pretends to be a legitimate how-to book on buying stocks

but that, when you follow its instructions, ends up giving your money to a thief.

The courts know how to handle this too:

fraud isn't protected by the First Amendment.

The computer is again irrelevant.

So there's no real argument for

creating a First Amendment exception for software.

There is, meanwhile, a very strong argument against

creating a First Amendment exception for software:

namely, this exception would end up swallowing the entire First Amendment

as computers learn to understand more and more types of communication.

As

the Ninth Circuit Court of Appeals wrote

in 1999:

The distinction urged on us by the government would prove

too much in this era of rapidly evolving computer capabilities.

The fact that computers will soon be able to respond directly

to spoken commands, for example, should not confer on the

government the unfettered power to impose prior restraints on

speech in an effort to control its "functional" aspects.

The crypto wars: early history

For decades the FBI and NSA

have been trying and failing to convince Congress to outlaw strong encryption.

Their arguments have always been the same:

terrorism, drug dealers, terrorism, child pornography, terrorism, etc.

Congress has always paid more attention to the counterarguments:

most importantly,

strong encryption will

still be available for the terrorists,

while efforts to weaken encryption are a

security disaster for the rest of us.

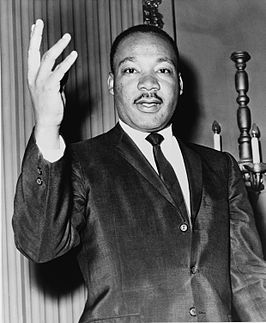

The FBI and NSA

have also undermined their positions

by establishing a track record of abusing their power:

spying on their own love interests,

for example,

and

spying on civil-rights leaders.

What do you do if you're a spy and Congress isn't doing what you want?

You take matters into your own hands!

The NSA convinced the State Department

to declare that encryption software was a "munition"

under the "Arms Export Control Act",

and that disclosing encryption software to a foreigner without a license

was an illegal "export".

The government's

stated goal

was to

"control the widespread foreign availability

of cryptographic devices and software

which might hinder its foreign intelligence collection efforts".

Let's again imagine this without the computer.

The State Department declares that modern-day Jefferson's how-to book on encryption is a "munition"

under the Arms Export Control Act,

and that disclosing Jefferson's instructions to a foreigner without a license

is an illegal "export".

The government's goal is to "control the widespread foreign availability"

of encryption instructions

"which might hinder its foreign intelligence collection efforts".

The computer is once again irrelevant.

This isn't just a hypothetical analogy.

For many years the government

claimed that encryption instructions for humans

were controlled by the Arms Export Control Act.

For example,

in 1977, NSA employee Joseph Meyer

told the organizers of a cryptographic conference that they would be

subject to prosecution.

In 1993 the State Department officially classified

a piece of cryptographic software and a cryptographic paper as "munitions".

Two years later,

after being dragged into court,

the State Department changed its mind regarding the paper,

an incident that the judge called

"disquieting".

Fundamentally,

the FBI and NSA would like to censor books and papers

for the same reasons that they would like to censor software.

The only reason that the government gave up on censoring books and papers

was in an attempt to avoid First Amendment scrutiny:

everyone can see that books and papers are covered by the First Amendment,

whereas most people find software to be something mysterious and incomprehensible.

First Amendment protection for encryption software

Back to the court case.

The judge focused on software,

and found that the government's regulations were an

"unconstitutional prior restraint in violation of the First Amendment".

The government had argued that software doesn't qualify for First Amendment protection,

but the judge found that

"source code is speech":

Contrary to defendants' suggestion,

the functionality of a language does not make it any less like speech. ...

Instructions, do-it-yourself manuals, recipes,

even technical information about hydrogen bomb construction,

see United States v. The Progressive, Inc.,

467 F. Supp. 990 (W.D. Wisc. 1979), are often purely functional;

they are also speech. ...

The music inscribed in code

on the roll of a player piano

is no less protected for being wholly functional.

Like source code converted to object code,

it "communicates" to and directs the instrument itself,

rather than the musician, to produce the music.

That does not mean it is not speech.

Like music and mathematical equations,

computer language is just that, language,

and it communicates information either to a computer or to those who can read it.

Defendants argue in their reply

that a description of software in English informs the intellect

but source code actually allows someone to encrypt data.

Defendants appear to insist

that the higher the utility value of speech the less like speech it is.

An extension of that argument

assumes that once language allows one to actually do something,

like play music or make lasagne, the language is no longer speech.

The logic of this proposition is dubious at best.

Its support in First Amendment law is nonexistent.

In response,

the NSA convinced the Department of Commerce

to set up suspiciously similar new regulations

under the "International Emergency Economic Powers Act".

The judge found that these new regulations were

also unconstitutional.

The government appealed to the Ninth Circuit Court of Appeals,

which again found that the regulations were

unconstitutional.

A side comment from the court

recognized the dawn of a golden age of surveillance:

In this increasingly electronic age,

we are all required in our everyday lives

to rely on modern technology to communicate with one another.

This reliance on electronic communication, however,

has brought with it a dramatic diminution in our ability to communicate privately.

Cellular phones are subject to monitoring,

email is easily intercepted,

and transactions over the internet are often less than secure.

Something as commonplace as

furnishing our credit card number, social security number, or bank account number

puts each of us at risk.

Moreover, when we employ electronic methods of communication,

we often leave electronic "fingerprints" behind,

fingerprints that can be traced back to us.

Whether we are surveilled by our government, by criminals, or by our neighbors,

it is fair to say that never has our ability

to shield our affairs from prying eyes been at such a low ebb.

In another case against the same regulations,

the Sixth Circuit Court of Appeals also found that the regulations were

unconstitutional.

The Apple-FBI case

Fast forward to 2016.

The FBI has found another way to apply pressure to software publishers:

"You provided software that was used to encrypt this data.

The All Writs Act says that you have to help us decrypt it!"

The FBI is, for example,

trying to use the All Writs Act to conscript Apple into

- writing anti-encryption software to be run on Syed Rizwan Farook's work-issued iPhone, and
- falsely signing that software as being legitimate.

When I say "anti-encryption",

what I mean is that if this new software works

then maybe the FBI will be able to understand the encrypted files stored on that iPhone.

Nobody expects this iPhone to actually have any files of interest

(Farook also owned, and destroyed, a personal iPhone),

but other law-enforcement agencies have expressed their eagerness

to use the same software against drug dealers.

Let's imagine the same scenario without computers.

Jefferson's "How to Encrypt" instructions

are being used by Madison and many other innocent people,

but they're also being used by evil Farook.

As part of a sting operation against Farook,

the FBI is demanding that Jefferson

- write new anti-encryption instructions, and
- falsely sign those instructions as being legitimate.

The FBI hopes that Farook,

seeing Jefferson's signature and not realizing what's going on,

will be fooled into following the anti-encryption instructions,

so maybe the FBI will be able to understand Farook's files.

Actually, everyone expects that Farook already destroyed all of the interesting files,

but other law-enforcement agencies are eager to reuse Jefferson's false signature

against drug dealers.

Jefferson, however, doesn't want to write or sign these anti-encryption instructions.

He considers these instructions

"too dangerous to create".

Is Jefferson free to say what he wants

if what he wants to say is nothing at all?

The Supreme Court says yes:

freedom of speech includes

"both what to say and what not to say".

There are some exceptions,

but the exceptions are very far from the Jefferson case.

To summarize,

Jefferson has a First Amendment right to avoid writing, and to avoid signing,

the instructions demanded by the government.

Apple has the same First Amendment right.

The distinction between software and other instructions isn't relevant

to the free-speech analysis.

My guess is that courts will say no to the FBI interpretation of the All Writs Act,

without reaching the First Amendment issues.

But this obviously won't be the end of

government efforts to control software publishers.

Fortunately, just like the publishers of how-to books,

software publishers are protected by the First Amendment.

[2022.01.09 update: Updated links above.]

Version:

This is version 2022.01.09 of the 20160315-jefferson.html web page.
